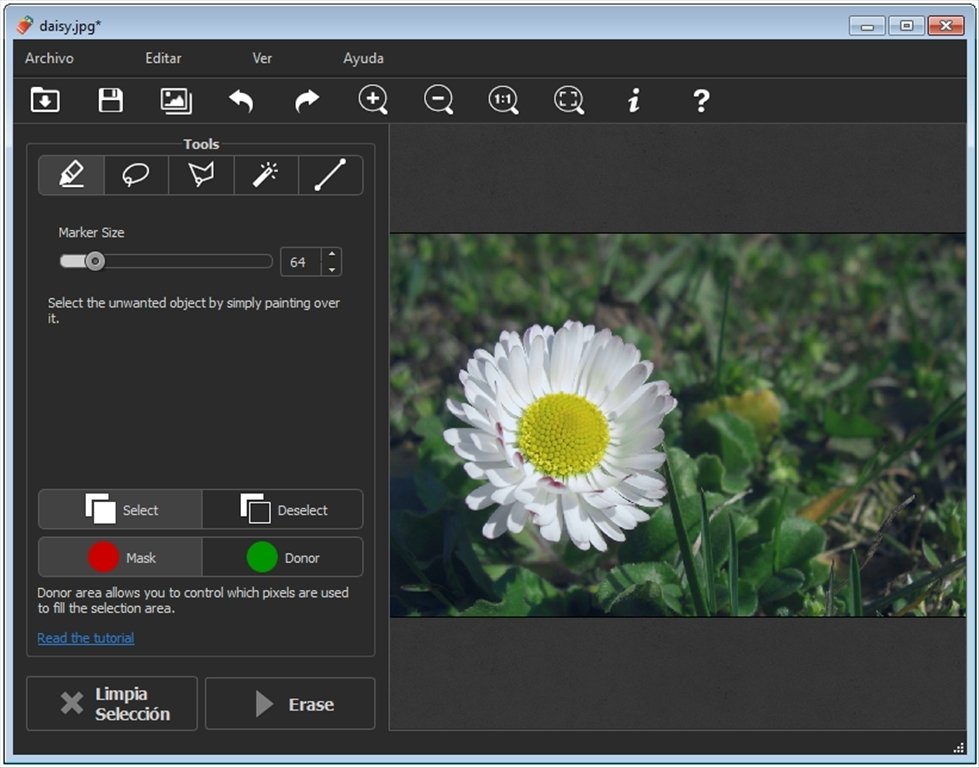

Mask more, go outside of the edges of the clothes.I've experienced that painting a little bit outside the 'lines / borders' is the best for the model, else you will get visible remnants / distortions at the edges of the mask in the generated image. You can change the size of the brush at the right of the image. MaskingNow it's time to mask / paint over all the areas you want to 'regenerate', so start painting over all clothing. Open your preferred image in the left area.Ĩ. You can use any photo manipulation software, or use ImagesStable Diffusion models work best with images with a certain resolution, so it's best to crop your images to the smallest possible area. at the edges), so the model will learn how to "fix" inconsistencies at the edges.ħ. Change masked padding, pixels this will include fewer/more pixels (e.g.Use a higher batch count to generate more images in one setting.Denoising strength: the lower the closer to the original image, the higher the more "freedom" you give to the generator.Latent Nothing: will generate no initial noise.Latent Noise: will generate new noise based on the masked content.This can be useful in some cases to keep body shape, but will more easily take over the clothes

Original: will use the masked area to make a guess to what the masked content should include.Fill: will fill in based on the prompt without looking at the masked area.EXPERIMENT: don't take this guide word-by-word.These are just recommendations / what works for me, experiment / test out yourself to see what works for you. EmbeddingsAdd the following files in the 'data/embeddings' The model file should be called uberRealisticPornMerge_urpmv12-inpainting.safetensors and the config file should be named uberRealisticPornMerge_urpmv12-inpainting.yamlĤ. Save them to the "data/StableDiffusion" folder in the Webui docker project you unzipped earlier. Go to (account required) and under Versions, click on ' URPMv1.2-inpainting', then at the right, download "Pruned Model SafeTensor" and "Config". The model file should be called realisticVisionV13_v13-inpainting.safetensors and the config file should be named realisticVisionV13_v13-inpainting.yaml Go to (account required) and under Versions, select the inpainting model (v13), then at the right, download "Pruned Model SafeTensor" and "Config". Inpainting model for nudesDownload one of the following for 'Nudifying', You should see something similar to this:ģ. To create a public link, set `share=True` in `launch()`. Note that it doesn't auto update the web UI to update, run git pull before running. To relaunch the web UI process later, run.A Python virtual environment will be created and activated using venv and any remaining missing dependencies will be automatically downloaded and installed. If you don't have any, see Downloading Stable Diffusion Models below. Place Stable Diffusion models/checkpoints you want to use into stable-diffusion-webui/models/Stable-diffusion.Clone the web UI repository by running git clone.Open a new terminal window and run brew install cmake protobuf rust git wget.Keep the terminal window open and follow the instructions under "Next steps" to add Homebrew to your PATH. If Homebrew is not installed, follow the instructions at to install it.I've chosen to use docker, since this will make it far easier to install the webui and minimal fiddling in the command prompt. (images with a resolution of < 1024px, for larger pictures, it takes longer) It does work without a Nvidia graphics card (GPU), but when using just the CPU it will take 10 to 25 minutes PER IMAGE, whereas with a GPU it will take 10 - 30 seconds per image. Non-GPU (not tested, proceed at own risk auseChamp: ) Windows 10 (64-bit): Home or Pro 21H1 (build 19043) or higher. Windows 11 (64 bit): Home or Pro version 21H2 or higher. Windows 10/11, 32 GB of RAM and a Nvidia graphics card with at least 4-6 GB VRAM (not tested by me, but reported to work), but the more the better. IntroductionThis guide will provide steps to install AUTOMATIC1111's Webui several ways.Īfter the guide, you should be able to go from the left image, to the right, Inpainting doesn't work, weird results / outputĠ.

Clicking 'generate' loads additional model / Checkpoint b3f1532ba0 not found loading fallback Cannot add middleware after an application has started

0 Comments

Leave a Reply. |

Details

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed